|

The advent of technologies such as virtual and augmented reality and 3D fabrication has created opportunities for enhancing object-based learning. These technologies allow educators to create tactile and virtual replicas of museum artifacts and to engage students in exploring and analyzing them. However, the availability of such technologies alone is not enough to foster object-based learning. In this project, we studied learners’ interactions while using different object-based learning platforms. Specifically, we compared how users interact with 3D printed replicas of museum artifacts, holographic replicas, and 3D digital models displayed on a screen.

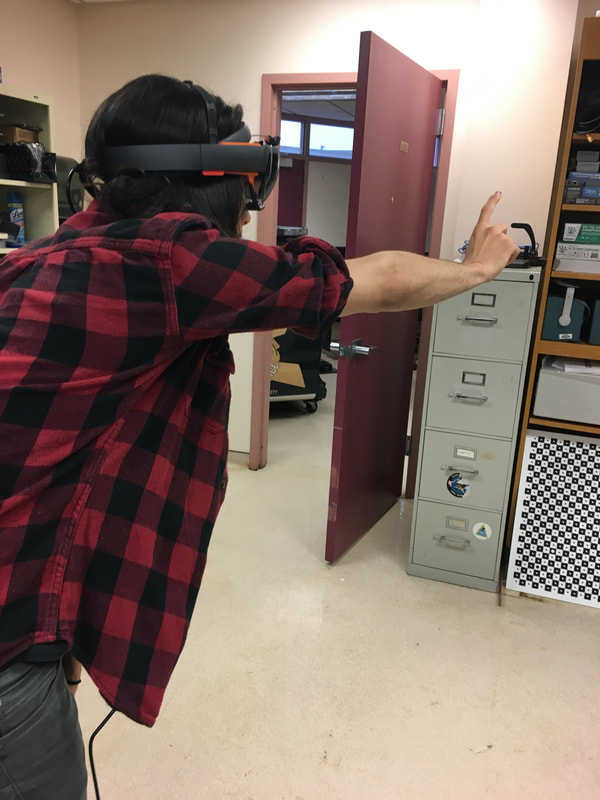

We conducted an experiment in which each participant experienced object-based learning about an item using one of the three technologies (AR device, computer, or 3D printed replica). Participants wore a head-mounted eye tracker, which recorded where they looked during the experiment, for how long, and their pupil size. Participants also answered a few questions about what they learned about the inventory items. I developed a computational model that uses the eye-tracking data to categorize where each participant was looking, and conducted a thematic analysis of the themes emerging from their responses about what they learned. I then ran statistical tests to compare the three technologies in terms of visual behavior and learning outcomes. |

|

Google Docs for HoloLens! The goal of this project was to connect multiple HoloLenses so that their users share the same AR space despite wearing different devices. I developed a Unity application with an interface in which every user can see the changes other users make in the shared space. Users can also track each other's gaze to see where others are looking. I also extended this application to the HoloMuse project so that users can experience a collaborative augmented museum visit.

|

|

This video shows the viewpoint of one user wearing a HoloLens while another user manipulates an augmented artifact. Both HoloLenses are equipped with an eye tracker add-on, so the cube marking the other user's gaze target shows exactly where they are looking (as opposed to the raw HoloLens data, which only indicates where the user's head is pointing).

|